Salt State and Pillar Architecture

Introduction

This document defines the architecture and operational model for deploying and maintaining Windows and Linux virtual machines using Aria Automation integrated with Salt.

The objective of this design is to:

- Standardise how configuration is applied to all virtual machines.

- Enforce strict separation between environments (dev, test, prod).

- Provide a repeatable promotion model for state and configuration changes.

- Centralise implementation logic in version-controlled Git repositories.

- Ensure secrets are encrypted in Git and isolated per environment.

- Allow role-based configuration to be selected at request time in Aria Automation.

- Keep the orchestration layer stable as the estate grows.

Aria Automation acts as the provisioning and orchestration initiator. Salt provides configuration enforcement. Git repositories provide the authoritative source of state logic and configuration data.

This architecture ensures that:

- Every virtual machine is built from a single, predictable entry point (bringup/init.sls).

- Environment isolation is enforced at the Git branch level.

- Role intent is passed from Aria via custom grains.

- Configuration data and secrets are stored in a separate pillar repository.

- All changes follow a controlled promotion path from dev to test to prod.

The remainder of this document describes:

- The core architectural principles

- Repository and branching strategy

- Environment mapping and Salt configuration

- Role selection and metadata flow

- Promotion and rollback procedures

- Secrets management

- Operational workflows

1. Core Design

1.1 Design Principles

This implementation follows these architectural principles:

- Idempotent – All states must be safe to re-run.

- Data-driven – Desired state is driven by metadata (grains/pillar), not hardcoded logic.

- Environment-isolated – Dev/Test/Prod separation is enforced via Git branches (GitFS + git pillar).

- Single entry point – All deployments begin with bringup/init.sls.

- Composable roles – Capabilities are added via role states, not by changing orchestration.

- Separation of concerns – Grains describe what the node is, pillar provides configuration data for roles/profiles.

This ensures predictable deployments, controlled promotion, and simplified maintenance at scale.

1.2 Single Entry State: bringup/init.sls

Each VM applies a single orchestration state. It is triggered by Aria Automation during provisioning and is safe to re-run for day-2 configuration:

salt 'some-minion' state.apply bringup saltenv=dev

This state:

- Contains no implementation logic

- Contains no package installation logic

- Contains no OS configuration directly

- Performs orchestration only, via include statements

It pulls in:

- Common baseline configuration

- Environment-specific overlay

- OS-specific baseline

- Role-specific configuration

This guarantees:

- A stable orchestration layer

- Minimal branching logic

- Clear separation of responsibilities

1.3 State Layer Responsibilities

A. profiles.common

Applies configuration common to all systems:

- Time sync

- Logging baseline

- Core security settings

- Standard users/groups

- Base monitoring agents

- Corporate certificates

This file must not contain environment or role-specific logic. The intent is to keep profiles.common stable; avoid rapid churn here and put change-heavy config into roles.

B. env/init.sls

Environment-specific overlay.

Because GitFS uses one branch per environment:

- dev branch → dev environment states

- test branch → test environment states

- prod branch → prod environment states

We add this to our bringup via an include block:

include:

- env

This will automatically resolve to the env/init.sls file within the active branch.

Importantly, each environment branch must contain env/init.sls. Otherwise the include cannot be resolved and the state run fails.

This avoids runtime environment switching logic inside states.
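The following is a minimal sketch of an env/init.sls overlay. It assumes a hypothetical pillar key, env:patch_window. The file exists in every environment branch and may include different states per branch. To honour the rule against hardcoded environment names, it reads its values from that branch's pillar instead of testing which environment it is in.

```yaml
# salt://env/init.sls (a copy exists in each environment branch)
# 'env:patch_window' is a hypothetical pillar key supplied by the same branch.
{% set patch_window = salt['pillar.get']('env:patch_window', 'not set') %}

env_overlay_note:
  test.show_notification:
    - text: "Environment overlay applied; patch window is {{ patch_window }}"
```

Because the matching pillar branch supplies the value, the file can be merged unchanged from dev to test to prod.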

C. OS Profiles

Split by OS family:

- profiles.linux

- profiles.windows

These files may:

- Branch further by OS version

- Apply OS baseline hardening

- Configure package managers

- Install OS-specific agents

OS branching must remain inside profile files, not scattered throughout roles. If a role applies to both OS types, keep the role name the same and branch internally (or split into roles/<role>/linux.sls + roles/<role>/windows.sls).
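This sketch illustrates the split-file option. The role's init.sls dispatches on the native os_family grain and fails loudly for any family it does not support. The monitoring role and its linux.sls/windows.sls files are hypothetical. raise is the Jinja function Salt provides for aborting rendering with a custom error.

```yaml
# salt://roles/monitoring/init.sls (hypothetical role)
# OS-specific implementation lives in roles/monitoring/linux.sls and windows.sls.
{% set osfam = grains.get('os_family', '') %}

include:
{% if osfam == 'Windows' %}
  - roles.monitoring.windows
{% elif osfam in ['RedHat', 'Debian', 'Suse'] %}
  - roles.monitoring.linux
{% else %}
{{ raise('roles.monitoring does not support os_family: ' ~ osfam) }}
{% endif %}
```

With this layout the role name stays the same for both OS types, as the naming rules require.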

D. Roles

Roles represent application capabilities or system functions.

Examples:

- roles.monitoring

- roles.sibel

- roles.nginx

- roles.iis

- roles.sql

Roles are:

- Modular

- Independent

- Reusable

- Driven by role intent metadata (grains) and role configuration (pillar)

Adding a new role requires:

- Creating the role state file

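- Adding the role name to the approved roles list in pillar (where that governance control is used), plus any role configuration values

- Making the role selectable in Aria (so it sets the grain value)

Importantly, no modification to the bringup logic is required.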

1.4 Metadata and Configuration Sources

Aria Automation defines VM intent. Role intent is passed to Salt via grains. Pillar provides configuration values consumed by roles/profiles.

Minimum recommended pillar structure:

profile: rhel9_server
tags:
  - hardened
repo_urls:
  internal_yum: http://repo/dev

Recommended responsibilities of pillar:

- Defines which baseline profile the VM uses (for example, profile)

- Defines which optional capabilities are switched on (for example, tags used as feature toggles)

- Contains environment-specific data (URLs, endpoints, patch schedules)

- Contains sensitive data where appropriate

Pillar must not contain implementation logic.
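The sketch below shows how a profile might consume the pillar structure above, assuming a RHEL-family minion. It reads repo_urls:internal_yum and the tags list from pillar rather than embedding environment URLs. The state IDs and the repository ID internal are illustrative.

```yaml
# Fragment of salt://profiles/linux.sls (RHEL-family minion assumed)
{% set internal_yum = salt['pillar.get']('repo_urls:internal_yum') %}
{% set tags = salt['pillar.get']('tags', []) %}

internal_yum_repo:
  pkgrepo.managed:
    - name: internal
    - humanname: Internal repository
    - baseurl: {{ internal_yum }}

{# Feature toggle: only applied when the pillar tag is present #}
{% if 'hardened' in tags %}
hardening_toggle_note:
  test.show_notification:
    - text: "Pillar tag 'hardened' is set for this node"
{% endif %}
```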

1.5 Branching Philosophy

All branching must occur at controlled points:

- OS branching → inside profiles

- Role branching → via grains (role list), expanded in bringup/init.sls

- Environment branching → via Git branch (saltenv)

- Feature toggles → via tags in pillar

Branching must never be:

- Hardcoded deep inside roles

- Driven by hostname patterns

- Driven by ad-hoc grain values that are not set by Aria or not documented.

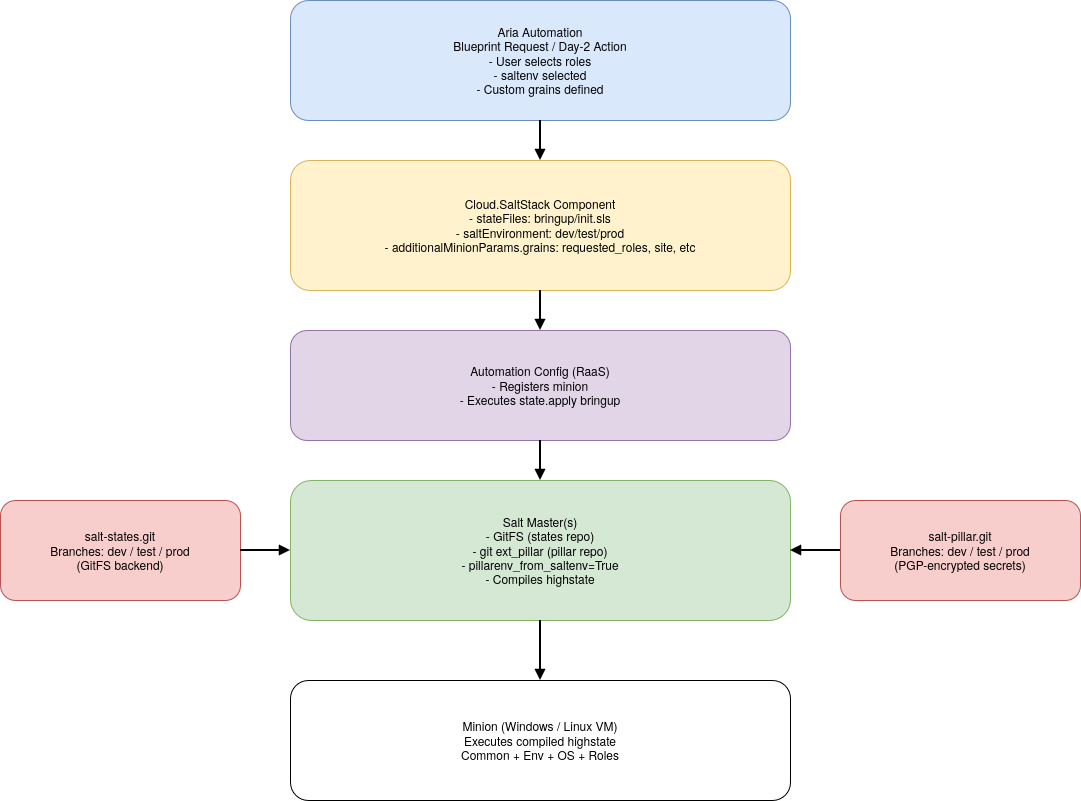

1.6 High-Level Data Flow Diagram

This diagram illustrates:

- Aria Automation as the initiator of configuration.

- The Cloud.SaltStack component passing:
  - saltenv
  - Custom grains (role intent + metadata)

- The bringup/init.sls entry point.

- Automation Config (RaaS) orchestrating execution.

- Salt master resolving:
  - State files from GitFS (states repo)
  - Pillar data from git ext_pillar (pillar repo, including PGP-encrypted secrets)

- The final highstate being compiled from that state tree and pillar and applied on the minion.

Environment isolation occurs at Git branch level.

Role selection occurs via grains.

Configuration values and secrets are sourced from pillar.

2. Repositories and Environment Model

This section defines how configuration logic and configuration data are structured, versioned, isolated, and promoted across environments.

The repository model establishes the platform’s single source of truth and enforces environment separation structurally rather than conditionally.

2.1 Separation of Logic and Data

This architecture uses two independent Git repositories to enforce separation of concerns.

2.1.1 State Repository

Example: salt-states.git

Purpose: Configuration logic (how systems are configured)

Contains:

- bringup/

- profiles/

- roles/

- env/

- Templates, map files, reusable modules

This repository defines:

How configuration is applied.

It must not contain:

- Environment-specific secrets

- Plaintext credentials

- Business configuration values that belong in pillar

2.1.2 Pillar Repository

Example: salt-pillar.git

Purpose: Configuration data (what values are applied)

Contains:

- Environment-specific configuration values

- Role configuration parameters (URLs, versions, feature flags)

- Site / region configuration

- Approved role lists (optional governance control)

- Secrets (PGP-encrypted)

This repository defines:

What configuration values are consumed by states.

It must not contain:

- Orchestration logic

- Role expansion logic

- Implementation logic that belongs in states

No orchestration logic exists in the pillar repository.

No secrets exist in the state repository.

This separation reduces blast radius of change and enforces clean ownership boundaries.

2.2 Environment Branch Model

Both repositories use identical environment branches:

dev

test

prod

Each branch represents a fully isolated environment boundary.

There is no shared base branch serving multiple environments.

Each branch must be:

- Self-contained

- Independently deployable

- Free of cross-environment references

- Complete with required directory structure (including env/init.sls)

Environment isolation exists exclusively at the Git branch level.

2.3 Environment Mapping

Environment selection is controlled by saltenv.

| Git Branch | saltenv | pillarenv |
|---|---|---|
| dev | dev | dev |
| test | test | test |
| prod | prod | prod |

The Salt master is configured with:

pillarenv_from_saltenv: True

This guarantees:

- State and pillar environments remain aligned.

- Cross-environment mismatches are prevented.

- Environment resolution occurs before state compilation.

- No runtime environment switching is required inside state files.

Environment selection is controlled externally (Aria Automation or operator command), not dynamically inside state logic.
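The following is a minimal master configuration sketch for this mapping. The Git URLs are placeholders, and authentication, GitFS provider and cache settings are omitted. GitFS exposes each branch of the states repository as a saltenv of the same name, and gitfs_saltenv_whitelist limits this to the three environment branches. Each git ext_pillar entry maps a pillar branch to the pillarenv of the same name.

```yaml
# /etc/salt/master.d/environments.conf (URLs are placeholders)
fileserver_backend:
  - gitfs

gitfs_remotes:
  - https://git.example.internal/salt-states.git

# Only expose the environment branches as saltenvs
gitfs_saltenv_whitelist:
  - dev
  - test
  - prod

# Branch name -> pillarenv of the same name
ext_pillar:
  - git:
    - dev https://git.example.internal/salt-pillar.git
    - test https://git.example.internal/salt-pillar.git
    - prod https://git.example.internal/salt-pillar.git

# Keep pillar aligned with the requested saltenv
pillarenv_from_saltenv: True
```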

2.4 How Salt Resolves State and Pillar

When a state run is executed:

salt '<target>' state.apply bringup saltenv=test

Salt performs:

- Load state files from salt-states.git branch test

- Load pillar data from salt-pillar.git branch test

- Compile pillar (including encrypted values)

- Execute bringup/init.sls from that branch

All environment isolation is enforced structurally by branch selection.

No environment branching occurs inside state files.

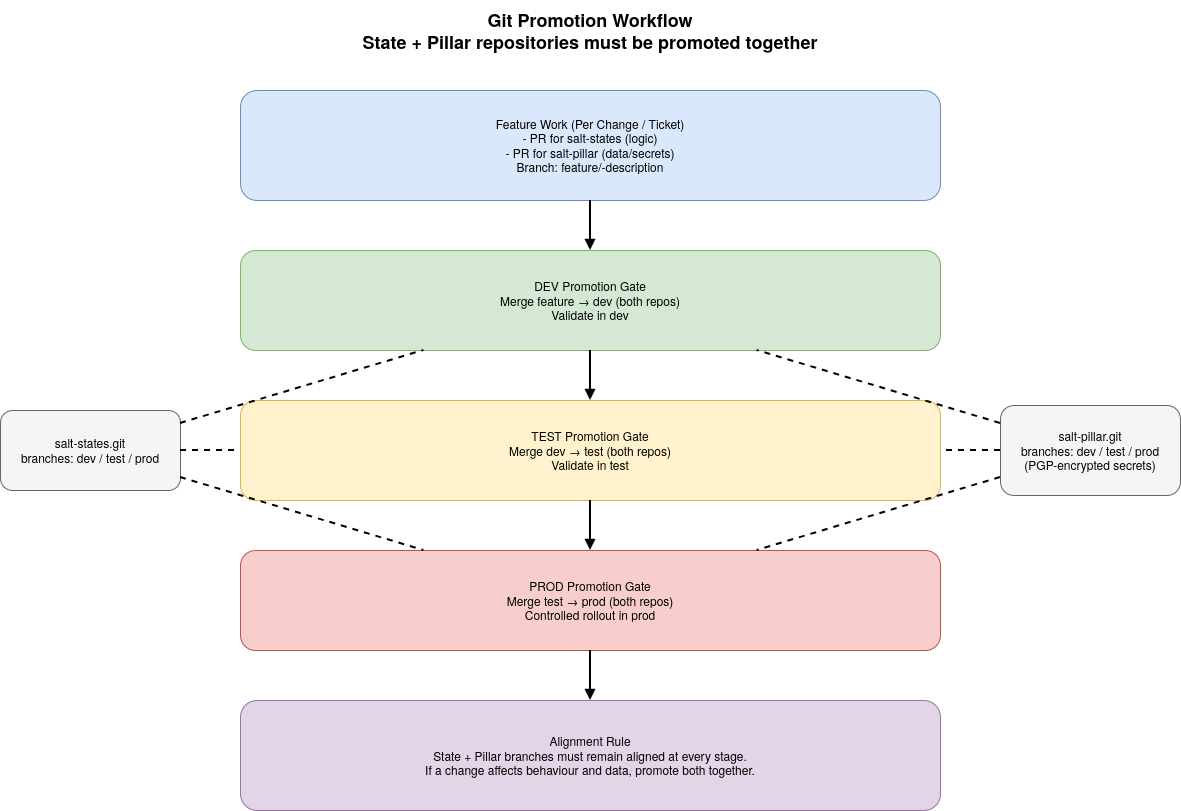

2.5 Promotion Model

Changes are promoted through environments using controlled Git merges.

Promotion path:

dev → test → prod

Promotion applies equally to both repositories:

- State repository

- Pillar repository

A change is considered promoted only when:

- State changes are merged to the target branch

- Corresponding pillar changes are merged to the same branch

Promotion must:

- Represent exactly what was validated in the previous environment

- Not introduce additional modifications

- Be traceable to an approved change or ticket

State and Pillar branches must remain aligned at every stage of promotion.

Promotion of one repository without the other is not permitted when behavioural coupling exists.

2.6 Branch Protection and Governance

Recommended controls:

- Require Pull Requests for merges into test and prod

- Prevent direct commits to prod

- Use ticket IDs in PR titles

- Keep feature work out of environment branches

Recommended workflow:

- Create feature branch: feature/<ticket>-description

- Merge into dev

- Validate in dev

- Promote dev → test

- Validate in test

- Promote test → prod

Promotion PRs must represent the exact code and configuration validated in the prior environment.

2.7 Secrets Management (PGP-Encrypted Pillar)

The pillar repository contains sensitive configuration values such as:

- Active Directory join credentials

- Service account passwords

- API keys

- Private endpoints

- Certificates or tokens

Sensitive values must not be stored in plaintext.

Encryption Model

Secrets are encrypted with GPG against the master's public key before commit, producing PGP message blocks that Salt's gpg renderer can decrypt.

Encrypted values are stored in the appropriate environment branch.

Salt decrypts the value during pillar compilation using the master’s private key.

Plaintext secrets are never stored in Git.
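Below is an illustrative pillar file in this format. The #!yaml|gpg render pipeline tells Salt to parse the file as YAML and then decrypt any PGP message blocks using the GPG keyring on the master. The file path, keys and account name are hypothetical. The ciphertext shown is a placeholder rather than a real PGP message.

```yaml
#!yaml|gpg
# Hypothetical path: secrets/ad.sls in the dev branch of salt-pillar.git
# The ciphertext is a placeholder, not a real PGP message.
ad_join:
  username: svc-adjoin-dev
  password: |
    -----BEGIN PGP MESSAGE-----
    <ciphertext encrypted to the master's public key>
    -----END PGP MESSAGE-----
```

Only the encrypted block is committed. The plaintext exists only after pillar compilation on the master.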

Environment Separation of Secrets

Each environment branch must contain:

- Only secrets relevant to that environment

- Separate credentials for dev/test/prod

- No production credentials in lower environments

Shared credentials across environments are not permitted.

Secret changes follow the same dev → test → prod promotion discipline.

Implementation specifics of encryption and key management are outside the scope of this document.

2.8 Rollback Strategy

Rollback is performed at the Git level.

Options include:

- Reverting specific commits

- Resetting a branch to a previous tag

- Merging a corrective change

Once reverted, re-running bringup converges systems to the reverted configuration state.

There is no manual “state rollback” mechanism outside of Git control.

2.9 Architectural Guarantees

This repository and environment model guarantees:

- Deterministic configuration per environment

- Structural environment isolation

- Alignment between state and pillar data

- Controlled promotion across environments

- Secure handling of sensitive values

- Clear audit trail of change

All configuration behaviour is reproducible from repository content and branch selection.

3. Runtime Execution Model

This section describes how Aria Automation and Salt interact at runtime to produce the final configuration state executed on a virtual machine.

It focuses on execution flow rather than repository structure or promotion controls.

3.1 Provisioning Trigger

The runtime process begins when a user submits a request in Aria Automation.

The request includes:

- Target environment (dev, test, or prod)

- One or more selected roles

- Site or classification metadata (as applicable)

Aria provisions the virtual machine and invokes the Cloud.SaltStack component as part of the deployment process.

Aria acts as:

- The initiator of configuration

- The source of role intent

- The selector of environment (saltenv)

3.2 Metadata Injection

At provisioning time, Aria passes metadata to the minion via:

- saltEnvironment (maps to saltenv)

- Custom grains (e.g. requested_roles, site, classification)

Grains describe the identity and intent of the node.

This metadata becomes available to Salt during state compilation.

No role logic is embedded inside Aria templates beyond setting metadata.

3.3 State Execution Entry Point

All configuration begins with a single orchestration state:

bringup/init.sls

This file is the only state invoked directly.

It does not contain implementation logic.

Instead, it composes the final configuration by including:

- Common baseline states

- Environment overlay

- OS-specific profile

- Role states based on metadata

This guarantees a predictable and repeatable configuration path for every system.

3.4 Environment Resolution

When execution begins:

- The selected saltenv determines which state branch is used.

- Pillar environment aligns automatically.

- The master loads the appropriate state and pillar trees.

Environment selection occurs before state compilation.

There is no dynamic environment switching inside state files.

3.5 Highstate Compilation

The highstate is compiled in the following conceptual stages. Pillar, including decryption, is compiled on the master. State files are served from the master's GitFS file server and rendered on the minion using its grains and that compiled pillar.

- Load state tree from the selected branch.

- Load pillar data from the aligned branch.

- Decrypt any encrypted pillar values.

- Evaluate Jinja and render state files.

- Expand includes defined in bringup/init.sls.

- Resolve dependencies and ordering.

The result is a fully compiled highstate specific to:

- Environment

- Operating system

- Selected roles

- Node metadata

3.6 Minion Execution

The compiled highstate is executed on the minion.

The minion:

- Executes the ordered list of states

- Enforces idempotent configuration

- Reports results back to the master

Re-running the same state produces the same end state unless configuration inputs or custom grains have changed.

3.7 Deterministic Outcome

The runtime model guarantees:

- The same inputs produce the same configuration outcome.

- Environment boundaries are enforced structurally.

- Role selection affects only included role states.

- Configuration data is resolved at compile time.

- Secrets are decrypted only on the master.

All behaviour is driven by:

- Git branch selection

- Metadata provided at provisioning

- Repository content

There is no hidden runtime logic outside these controls.

4. Role Selection & Metadata Model

This section defines how role intent is expressed, propagated, and enforced within the platform.

4.1 Role Intent Source

Role intent originates in Aria Automation.

When requesting a virtual machine, a user selects one or more roles appropriate to the workload.

Examples:

- iis

- nginx

- endpoint_protection (e.g. CrowdStrike Falcon)

- sql

- ad

Role names represent functional capability, not specific vendor products.

Role selection is part of the provisioning request and is treated as declarative intent.

4.2 Metadata Injection via Grains

Selected roles are passed to the minion as custom grains.

Recommended model:

requested_roles:

- iis

- monitoring

Additional grains may include:

- site

- classification

- patch_group

- Feature flags

Grains define what the node is intended to be.

They do not contain configuration values or secrets.
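As an illustration, the static grains on a Linux minion might look like this once role intent has been injected. The values are hypothetical, and /etc/salt/grains is assumed as the location. How Aria writes the grains depends on how the Cloud.SaltStack component is configured.

```yaml
# /etc/salt/grains on a Linux minion (illustrative values only)
requested_roles:
  - nginx
  - monitoring
site: site-a
classification: internal
patch_group: group-1
# No secrets and no configuration values belong here.
```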

4.3 Role Expansion in bringup

bringup/init.sls reads the requested_roles grain and dynamically includes matching role states.

Conceptually:

- For each value in requested_roles:
  - Include roles.<role_name>

If a referenced role state does not exist, compilation must fail.

This mechanism ensures:

- Adding a new role requires only a new state file.

- No modification to bringup logic is required.

- Role selection scales without structural changes.

4.4 Role Naming Conventions

To maintain consistency:

- Role names must match the directory or state file name.

- Role names must be lowercase.

- Role names must not contain environment identifiers.

- Role names must not encode OS type.

Examples:

Correct:

- iis
- nginx
- monitoring

Incorrect:

- prod_iis
- windows_iis
- iis_dev

OS-specific behaviour should be handled inside role states or OS profiles, not in role names.

4.5 Role vs Profile

Roles represent capabilities.

Profiles represent system baselines.

Profiles:

- Applied automatically based on OS.

- Define system-level defaults and standards.

Roles:

- Applied based on user-selected intent.

- Define workload-specific configuration.

Roles must not duplicate profile responsibilities.

4.6 Governance Controls

To maintain stability and prevent misuse:

- Only approved roles may be selectable in Aria.

- Role names must correspond to existing state definitions.

- Unsupported or unknown roles must not silently succeed.

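- Role removal (removing a grain value) must be treated as configuration drift and handled intentionally. Removing a role from requested_roles stops its states from being applied, but it does not undo the configuration those states already enforced.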

Optional governance model:

- Maintain an approved roles list in pillar.

- Validate requested roles during state compilation.
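Here is a minimal sketch of the optional validation step, placed at the top of bringup/init.sls. It assumes a hypothetical pillar key, governance:approved_roles, holding a list of role names. It uses Salt's Jinja raise function so that an unapproved role stops compilation instead of silently succeeding.

```yaml
# Fragment for the top of salt://bringup/init.sls
# Assumes a hypothetical pillar key 'governance:approved_roles' (a list of names).
{% set approved = salt['pillar.get']('governance:approved_roles', []) %}
{% set requested = grains.get('requested_roles', []) %}

{% for r in requested %}
{% if r|lower not in approved %}
{# Abort rendering so an unapproved role cannot silently succeed #}
{{ raise('Requested role "' ~ r ~ '" is not in governance:approved_roles') }}
{% endif %}
{% endfor %}
```

An empty or missing approved list rejects every role, so this check fails closed. Pillar here only validates roles. It does not decide which roles are included.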

4.7 Custom Grain Modification

Custom grains are part of the configuration input surface.

Re-running bringup produces the same end state unless:

- Configuration data has changed, or

- Custom grains have changed.

If a user modifies requested_roles directly on a minion and re-runs configuration, the resulting highstate may differ.

Therefore:

- Grain modification must be governed.

- Aria remains the authoritative source of role intent.

- Manual grain changes should be avoided in production environments.

4.8 Architectural Guarantees

This model ensures:

- Role selection is declarative.

- Roles are modular and independently maintainable.

- Adding roles does not require orchestration changes.

- Runtime logic remains centralised in bringup/init.sls.

- Environment isolation remains unaffected by role selection.

5. Configuration Data & Secret Model

This section defines how configuration data is structured and where different types of information must reside.

It establishes clear boundaries between:

- State logic

- Pillar data

- Grains (node metadata)

5.1 Separation of Concerns

The architecture enforces strict separation between:

| Component | Responsibility |
|---|---|
| States | Define configuration logic and enforcement behaviour |
| Pillar | Provide configuration values and secrets |
| Grains | Describe node identity and role intent |

Blurring these boundaries introduces drift and unpredictability.

5.2 What Belongs in State Files

State files define:

- Packages to install

- Services to enable

- Files to manage

- Templates to render

- System configuration enforcement

State files must:

- Be environment-agnostic

- Not contain hardcoded environment-specific values

- Not contain plaintext secrets

- Not embed business-specific configuration data

States describe how to configure something, not what values to use.

5.3 What Belongs in Pillar

Pillar provides configuration values consumed by states.

Examples:

- Application configuration values

- URLs and repository endpoints

- Version numbers

- Feature flags

- Site-specific settings

- Credentials (encrypted)

Pillar data may vary between environments.

Pillar is compiled at runtime and merged per minion.

Pillar must not:

- Contain orchestration logic

- Contain role expansion logic

- Duplicate logic found in states

Pillar answers the question:

What values should be applied?

5.4 What Belongs in Grains

Grains describe node identity and declarative intent.

Examples:

- requested_roles

- site

- classification

- os_family (native grain)

Grains must not:

- Contain secrets

- Contain configuration values

- Contain environment logic

Grains answer the question:

What is this node supposed to be?

5.5 Secret Handling Principles

Sensitive values are stored in pillar only.

Architectural controls:

- Secrets must be encrypted before commit.

- Secrets must never be stored in plaintext in Git.

- Secrets must never be embedded in state files.

- Secrets must never be passed via grains.

- Each environment branch must contain only its own credentials.

Decryption occurs on the master during pillar compilation.

Implementation specifics of encryption and key management are outside the scope of this document.

5.6 Template Rendering Model

When states render configuration files:

- Templates pull values from pillar.

- Templates must not reference environment names directly.

- Templates must not include conditional logic that bypasses environment isolation.

Templates should consume pillar data consistently across environments.
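This sketch shows a role state that renders a template using a value taken from pillar. The nginx paths and the nginx:upstream_url pillar key are hypothetical. The template would reference upstream_url and contain no environment names, so the same file renders correctly in every branch.

```yaml
# salt://roles/nginx/init.sls (hypothetical paths and pillar key)
nginx_app_conf:
  file.managed:
    - name: /etc/nginx/conf.d/app.conf
    - source: salt://roles/nginx/files/app.conf.j2
    - template: jinja
    # The template receives the value; the environment is implied by the pillar branch
    - context:
        upstream_url: "{{ salt['pillar.get']('nginx:upstream_url') }}"
```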

5.7 Change Impact Model

Changing:

- A state file modifies configuration behaviour.

- A pillar value modifies configuration inputs.

- A grain value modifies role or identity intent.

Re-running bringup produces the same end state unless configuration inputs or custom grains have changed.

This ensures predictable and explainable configuration outcomes.

5.8 Anti-Patterns

The following are not permitted:

- Hardcoding passwords in state files

- Embedding environment URLs directly in states

- Using grains to store secrets

- Using pillar to determine which roles to include

- Embedding business logic inside templates

- Duplicating configuration values in multiple repositories

5.9 Architectural Guarantees

This model guarantees:

- Clear ownership of logic vs data.

- Secure handling of sensitive values.

- Predictable environment behaviour.

- Scalable role expansion.

- Reduced risk of configuration drift.

6. Promotion & Change Model

This section defines how configuration changes are introduced, validated, promoted, and, if required, rolled back across environments.

It applies to both State and Pillar repositories.

6.1 Change Types

Changes fall into three categories:

- State Logic Changes
  - New role
  - Modification to existing role
  - OS baseline change
  - Template modification

- Configuration Data Changes
  - Version updates
  - Endpoint changes
  - Feature flag adjustments
  - Secret rotation

- Metadata Model Changes
  - New supported role
  - Role naming updates
  - New grain definitions

Each change type must follow the same promotion discipline.

6.2 Change Introduction

All changes must originate from a Git feature branch.

Example flow:

feature/<ticket>-description

Feature branches must not target test or prod directly.

Changes are first merged into dev.

6.3 Environment Promotion Flow

Promotion path:

dev → test → prod

Promotion applies equally to the State and Pillar repositories.

Promotion must:

- Represent exactly what was validated in the previous environment

- Not introduce additional modifications

- Be traceable to an approved change or ticket

State and Pillar branches must remain aligned at every stage of promotion.

6.4 Validation Expectations

Each promotion stage requires validation appropriate to the environment:

Dev

- Functional validation

- Role expansion validation

- Template rendering validation

Test

- Integration validation

- Environment-specific configuration validation

- Secret resolution validation

Prod

- Controlled rollout

- Targeted execution

- Monitoring and verification

The validation model must scale with system criticality.

6.5 Rollback Model

Rollback is performed at the Git level.

Options include:

- Reverting specific commits

- Resetting to a previous tag

- Merging a corrective change

Once reverted, re-running bringup will converge systems to the reverted configuration state.

There is no separate state rollback mechanism outside of Git control.

6.6 Change Coupling Between Repositories

Changes affecting behaviour often require updates in both repositories.

Examples:

- Adding a new role:
  - State repository: create roles/<role>.sls
  - Pillar repository: add configuration values (if required)

- Rotating a secret:
  - Pillar repository only

Both repositories must follow the same promotion cadence to maintain alignment. Promotion of one repository without the other is not permitted when behavioural coupling exists.

6.7 Governance Controls

To preserve environment integrity:

- Pull Requests required for test and prod.

- Direct commits to prod are not permitted.

- All changes must reference an approved ticket.

- Emergency changes must still follow post-change review and merge discipline.

Promotion must remain controlled and auditable.

6.8 Drift Prevention

Configuration drift is minimised by:

- Centralised Git source of truth

- Idempotent state execution

- Controlled promotion pipeline

- Avoidance of manual production changes

Manual modification of:

- Minion grains

- Production pillar branches

- State logic outside Git

introduces drift and must be avoided.

6.9 Architectural Guarantees

The promotion model ensures:

- Deterministic movement of change through environments.

- Clear audit trail of configuration evolution.

- Reduced risk of environment divergence.

- Controlled secret rotation.

- Repeatable rollback capability.

7. Platform Controls & Execution Safeguards

This section defines the platform-level controls required to ensure deterministic and predictable configuration behaviour.

These controls apply regardless of whether the platform is deployed with a single master or multiple masters.

7.1 Deterministic Environment Selection

Environment selection must always be explicit.

- saltenv must be specified during execution.

- State and pillar environments must remain aligned.

- No implicit or default environment switching is permitted.

Environment behaviour must be structurally enforced, not inferred.

7.2 Centralised Source of Truth

The platform must operate exclusively from Git-managed repositories.

- State logic must originate from the state repository.

- Configuration data must originate from the pillar repository.

- Local state or pillar overrides outside Git are not permitted.

All configuration behaviour must be reproducible from repository content.

7.3 Metadata Governance

Custom grains are part of the configuration input surface.

Controls:

- Role intent must originate from Aria Automation.

- Manual grain modification in production environments must be avoided.

- Grains must not contain secrets.

- Unsupported or invalid roles must not silently succeed.

Metadata changes directly affect configuration outcome and must be governed.

7.4 Secret Handling Controls

Secrets must:

- Reside only in the pillar repository.

- Be encrypted before commit.

- Be decrypted only during compilation.

- Be isolated per environment branch.

Secrets must never:

- Appear in state files.

- Be passed via grains.

- Be embedded in Aria templates.

7.5 Execution Predictability

Re-running bringup must produce the same end state unless:

- Configuration inputs have changed, or

- Custom grains have changed.

All configuration outcomes must be explainable based on:

- Git branch selection

- Repository content

- Node metadata

There must be no hidden runtime behaviour.

7.6 Drift Prevention

The architecture prevents drift through:

- Idempotent state design

- Centralised Git source of truth

- Controlled promotion workflow

- Explicit environment selection

Drift can occur if:

- Manual configuration is applied outside Salt.

- Production branches are edited directly.

- Grains are modified without governance.

- Secrets are injected outside pillar.

Such actions violate the architectural model.

7.7 Architectural Guarantees

When platform controls are respected, the architecture guarantees:

- Predictable configuration behaviour.

- Environment isolation enforced structurally.

- Clear traceability of change.

- Secure handling of sensitive data.

- Scalable role expansion without orchestration changes.

8. Operational Guardrails & Anti-Patterns

This section defines practices that are not permitted within this architecture.

These guardrails protect environment isolation, configuration determinism, and security posture.

8.1 Environment Guardrails

The following are not permitted:

- Conditional environment switching inside state files.

- Hardcoded references to dev, test, or prod within states.

- Cross-environment resource references.

- Running configuration without explicitly selecting saltenv.

Environment isolation must exist at the Git branch level only.
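The fragment below contrasts the prohibited and preferred patterns, using a hypothetical logging:level pillar key. The prohibited form sits inside a Jinja comment so it is never evaluated.

```yaml
{# Not permitted: branching on the environment name inside a state, e.g.
   {% if saltenv == 'prod' %} ...prod-only settings... {% endif %} #}

# Preferred: the value differs per environment because each branch's pillar differs.
# 'logging:level' is a hypothetical pillar key.
{% set log_level = salt['pillar.get']('logging:level', 'info') %}

logging_level_note:
  test.show_notification:
    - text: "Configured log level: {{ log_level }}"
```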

8.2 Repository Guardrails

The following are not permitted:

- Direct commits to production branches.

- Manual edits to repository content outside approved change workflow.

- Maintaining separate, undocumented configuration sources.

- Misalignment between State and Pillar branches.

All configuration behaviour must originate from version-controlled repositories.

8.3 Secret Handling Guardrails

The following are not permitted:

- Plaintext secrets committed to Git.

- Secrets embedded in state files.

- Secrets stored in grains.

- Secrets passed via Aria metadata.

Sensitive values must reside only in encrypted pillar data.

8.4 Metadata Guardrails

The following are not permitted:

- Unsupported or undefined roles.

- Role names that encode environment or OS.

- Manual modification of production grains without governance.

- Using grains to store configuration data or secrets.

Grains represent node identity and intent only.

8.5 State Design Guardrails

The following are not permitted:

- Embedding business logic inside templates.

- Hardcoding configuration values that belong in pillar.

- Duplicating configuration values across multiple repositories.

- Allowing state files to silently succeed when required configuration is missing.

States must remain modular, idempotent, and data-driven.
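This is a minimal sketch of failing loudly when required configuration is missing. It assumes a hypothetical pillar key, sql:service_account. pillar.get returns an empty string when the key is absent and no default is given. The explicit check turns that silent gap into a compilation error.

```yaml
# Fragment of a hypothetical roles/sql state
{% set svc_account = salt['pillar.get']('sql:service_account') %}

{% if not svc_account %}
{{ raise('Required pillar key sql:service_account is missing') }}
{% endif %}

sql_service_account_note:
  test.show_notification:
    - text: "SQL service account from pillar: {{ svc_account }}"
```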

8.6 Drift Guardrails

The following introduce configuration drift and violate architectural intent:

- Manual changes applied directly to systems outside Salt.

- Editing configuration on a minion without updating Git.

- Modifying custom grains outside Aria control.

- Skipping promotion stages.

All systems must converge from the same source of truth.

8.7 Architectural Integrity

If any of the above guardrails are violated, the following risks are introduced:

- Environment contamination

- Secret exposure

- Non-deterministic configuration

- Untraceable configuration drift

- Promotion breakdown

Adherence to these guardrails preserves the integrity of the architecture.

Appendices

Appendix A - bringup/init.sls

A clean, simple entry point for all deployments. It branches on OS family and then expands the requested roles.

```yaml
# salt://bringup/init.sls

{% set osfam = grains.get('os_family', '') %}
{% set roles = grains.get('requested_roles', []) %}

include:
  - profiles.common
  - env
{% if osfam in ['RedHat', 'Debian', 'Suse'] %}
  - profiles.linux
{% elif osfam == 'Windows' %}
  - profiles.windows
{% endif %}
{# Simple role fan-out (no nested validation loops) #}
{% for r in roles %}
  - roles.{{ r|lower }}
{% endfor %}
```
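The role loop above expects requested_roles to be a list. If Aria Automation passes the roles as a single comma-separated string instead, iterating over it would yield individual characters. A hypothetical guard that normalises either form, intended to replace the roles assignment at the top of the file:

```yaml
{# Expected grain shape (for example in /etc/salt/grains on Linux):
     requested_roles:
       - nginx
   Hypothetical guard: also accept "nginx, iis" style strings. #}
{% set roles = grains.get('requested_roles', []) %}
{% if roles is string %}
{# Split on commas, trim whitespace and drop empty entries #}
{% set roles = roles.split(',') | map('trim') | reject('equalto', '') | list %}
{% endif %}
```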

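Appendix B - profiles/common.sls

Cross-platform settings live here and are driven by pillar data. These states only report the intended NTP and DNS values; the OS profiles perform the actual enforcement.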

```yaml
# salt://profiles/common.sls

{% set ntp_server = salt.pillar.get('common:ntp_server', 'pool.ntp.org') %}
{% set dns_servers = salt.pillar.get('common:dns_servers', []) %}

# --- Time sync (generic, OS-specific details handled in OS profiles if needed) ---
common_ntp_server_note:
  test.show_notification:
    - text: "NTP server for this node is {{ ntp_server }}"

# --- DNS intent as pillar, applied in OS profiles (Linux/Windows do actual enforcement) ---
common_dns_intent_note:
  test.show_notification:
    - text: "DNS servers from pillar: {{ dns_servers }}"
```

Matching pillar data:

```yaml
# pillar/common.sls
common:
  ntp_server: "pool.ntp.org"
  dns_servers:
    - "10.0.0.10"
    - "10.0.0.11"
```

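Appendix C - profiles/linux.sls

This example assumes RHEL-like systems (dnf/yum). Note that bringup/init.sls also includes this profile for the Debian and Suse families, where the repository definition, chrony paths and service names differ, so branch on os_family inside the profile before applying it there. As written, it is a starting point for RHEL-like hosts only.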

```yaml
# salt://profiles/linux.sls

{% set default_repo_baseurl = 'http://repo.example.internal/rhel/$releasever/os/$basearch' %}
{% set repo_baseurl = salt.pillar.get('linux:repos:baseurl', default_repo_baseurl) %}
{% set ntp_server = salt.pillar.get('common:ntp_server', 'pool.ntp.org') %}
{% set dns_servers = salt.pillar.get('common:dns_servers', ['10.0.0.10', '10.0.0.11']) %}

# --- Repo configuration ---
linux_base_repo:
  pkgrepo.managed:
    - name: baseos
    - humanname: BaseOS
    - baseurl: {{ repo_baseurl }}
    - enabled: 1
    # gpgcheck is disabled here for brevity; set gpgcheck: 1 and a gpgkey for production repositories
    - gpgcheck: 0

# --- Time sync (chrony) ---
chrony_pkg:
  pkg.installed:
    - name: chrony

chrony_conf:
  file.managed:
    - name: /etc/chrony.conf
    - contents: |
        server {{ ntp_server }} iburst
        driftfile /var/lib/chrony/drift
        makestep 1.0 3
        rtcsync
    - require:
      - pkg: chrony_pkg

chronyd_service:
  service.running:
    - name: chronyd
    - enable: True
    # Restart chronyd whenever the rendered configuration changes
    - watch:
      - file: chrony_conf

# --- DNS (systemd-resolved example; adapt if you standardise on NetworkManager instead) ---
systemd_resolved_pkg:
  pkg.installed:
    # On distributions that package resolved separately (for example Fedora), install systemd-resolved instead
    - name: systemd

resolved_conf:
  file.managed:
    - name: /etc/systemd/resolved.conf
    - contents: |
        [Resolve]
        DNS={{ dns_servers | join(' ') }}
    - require:
      - pkg: systemd_resolved_pkg

systemd_resolved_service:
  service.running:
    - name: systemd-resolved
    - enable: True
    - watch:
      - file: resolved_conf
```

Matching pillar data:

```yaml
# pillar/linux.sls
linux:
  repos:
    baseurl: "http://repo.example.internal/rhel/$releasever/os/$basearch"
```
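If this profile is extended beyond RHEL-like systems, the OS-specific names can be looked up per family instead of being hardcoded. A sketch using grains.filter_by, assuming the distribution-default chrony paths and service names shown (verify them against your images); the configuration contents are trimmed to the server line.

```yaml
{# Per-family chrony settings; unknown families fall back to the RedHat entry #}
{% set chrony = salt['grains.filter_by']({
    'RedHat': {'conf': '/etc/chrony.conf', 'service': 'chronyd'},
    'Suse': {'conf': '/etc/chrony.conf', 'service': 'chronyd'},
    'Debian': {'conf': '/etc/chrony/chrony.conf', 'service': 'chrony'}
}, default='RedHat') %}
{% set ntp_server = salt.pillar.get('common:ntp_server', 'pool.ntp.org') %}

chrony_pkg:
  pkg.installed:
    - name: chrony

chrony_conf:
  file.managed:
    - name: {{ chrony.conf }}
    - contents: |
        server {{ ntp_server }} iburst
    - require:
      - pkg: chrony_pkg

chronyd_service:
  service.running:
    - name: {{ chrony.service }}
    - enable: True
    - watch:
      - file: chrony_conf
```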

Appendix D - profiles/windows.sls

Enforces time synchronisation (W32Time) and sets the DNS servers on the interface alias defined in pillar.

```yaml
# salt://profiles/windows.sls

{% set ntp_server = salt.pillar.get('common:ntp_server', 'time.windows.com') %}
{% set dns_servers = salt.pillar.get('common:dns_servers', ['10.0.0.10', '10.0.0.11']) %}
{% set iface_alias = salt.pillar.get('windows:primary_interface', 'Ethernet') %}

# --- Time sync (W32Time) ---
w32time_service:
  service.running:
    - name: W32Time
    - enable: True

set_ntp_server:
  cmd.run:
    - name: w32tm /config /manualpeerlist:"{{ ntp_server }}" /syncfromflags:manual /reliable:yes /update
    - shell: cmd
    # Skip when the peer is already configured, so re-runs report no changes
    # (assumes w32tm /query /peers prints a "Peer: <server>" line)
    - unless: 'w32tm /query /peers | findstr /C:"Peer: {{ ntp_server }}"'
    - require:
      - service: w32time_service

resync_time:
  cmd.run:
    - name: w32tm /resync
    - shell: cmd
    # Resync only after the peer configuration has actually changed
    - onchanges:
      - cmd: set_ntp_server

# --- DNS configuration (PowerShell) ---
set_dns_servers:
  cmd.run:
    # Quote each pillar entry so PowerShell receives a string array, e.g. '10.0.0.10','10.0.0.11'
    - name: >-
        powershell -NoProfile -ExecutionPolicy Bypass -Command
        "Set-DnsClientServerAddress -InterfaceAlias '{{ iface_alias }}' -ServerAddresses '{{ dns_servers | join("','") }}'"
    - shell: cmd
```

Matching pillar data:

```yaml
# pillar/windows.sls
windows:
  primary_interface: "Ethernet"
```
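Because cmd.run reports a change on every run, the DNS step above always shows as changed. Salt also ships a win_dns_client state that compares the current server list before changing it. A sketch of the same step using it, assuming the same pillar keys; check the state's documentation for your Salt release.

```yaml
{% set dns_servers = salt.pillar.get('common:dns_servers', ['10.0.0.10', '10.0.0.11']) %}
{% set iface_alias = salt.pillar.get('windows:primary_interface', 'Ethernet') %}

set_dns_servers:
  win_dns_client.dns_exists:
    - interface: '{{ iface_alias }}'
    - servers: {{ dns_servers | tojson }}
    # Remove any configured servers that are not in the pillar list
    - replace: True
```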

Appendix E - roles/nginx.sls

Installs nginx, ensures the service is enabled and running, and deploys a simple site configuration. Values come from pillar.

```yaml
# salt://roles/nginx.sls

{% set listen_port = salt.pillar.get('nginx:listen_port', 80) %}
{% set server_name = salt.pillar.get('nginx:server_name', 'localhost') %}

nginx_pkg:
  pkg.installed:
    - name: nginx

nginx_site_conf:
  file.managed:
    - name: /etc/nginx/conf.d/default.conf
    - contents: |
        server {
            listen {{ listen_port }};
            server_name {{ server_name }};

            location / {
                return 200 "nginx role applied\n";
            }
        }
    - require:
      - pkg: nginx_pkg

nginx_service:
  service.running:
    - name: nginx
    - enable: True
    - watch:
      - file: nginx_site_conf
```

Matching pillar data:

```yaml
# pillar/nginx.sls
nginx:
  listen_port: 80
  server_name: "nginx.example.internal"
```
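A variant worth considering validates the configuration before nginx is reloaded, so a bad pillar value fails the run instead of taking the service down. This sketch replaces nginx_service from the example above. Note that the new file is already on disk when nginx -t runs, so a failure blocks the reload but does not roll the file back.

```yaml
nginx_config_test:
  cmd.run:
    - name: nginx -t
    # Only validate when the site configuration actually changed
    - onchanges:
      - file: nginx_site_conf

nginx_service:
  service.running:
    - name: nginx
    - enable: True
    # Reload rather than restart when the watched file changes
    - reload: True
    - watch:
      - file: nginx_site_conf
    # A failed nginx -t stops this state from running
    - require:
      - cmd: nginx_config_test
```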

Appendix F - roles/iis.sls

Installs the IIS features, ensures the W3SVC service is enabled and running, and deploys a basic index page.

```yaml
# salt://roles/iis.sls

# Windows Server features are managed by the win_servermanager state module
iis_features:
  win_servermanager.installed:
    - features:
      - Web-Server
      - Web-Default-Doc
      - Web-Static-Content
      - Web-Http-Errors
      - Web-Http-Logging

w3svc_service:
  service.running:
    - name: W3SVC
    - enable: True
    - require:
      - win_servermanager: iis_features

iis_index:
  file.managed:
    - name: C:\inetpub\wwwroot\index.html
    - contents: |
        <html>
          <body>
            <h1>IIS role applied</h1>
          </body>
        </html>
    - require:
      - win_servermanager: iis_features
```

Appendix G - Example salt-master configuration for GitFS and git_pillar using dev/test/prod branches

G.1 /etc/salt/master.d/gitfs.conf

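```yaml
fileserver_backend:
  - gitfs

gitfs_provider: pygit2

gitfs_remotes:
  - https://git.example.internal/salt/salt-states.git:
    - name: salt-states

# gitfs exposes each branch as a saltenv of the same name (dev, test, prod).
# The whitelist stops any other branch from being served, including the
# base env that gitfs maps from the master branch by default.
gitfs_saltenv_whitelist:
  - dev
  - test
  - prod
```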

G.2 /etc/salt/master.d/pillar-git.conf

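```yaml
# Use the minion's saltenv as its pillarenv, so dev nodes only see dev pillar
pillarenv_from_saltenv: True

# One git_pillar remote per branch; "branch URL" selects the branch and env names the pillarenv
ext_pillar:
  - git:
    - dev https://git.example.internal/salt/salt-pillar.git:
      - env: dev
    - test https://git.example.internal/salt/salt-pillar.git:
      - env: test
    - prod https://git.example.internal/salt/salt-pillar.git:
      - env: prod
```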
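On the minion side, each node needs to be pinned to its environment so that state and pillar lookups resolve to the matching branch. A minimal sketch, assuming a drop-in file written at build time (the file name is illustrative; Windows minions use the equivalent minion.d directory).

```yaml
# /etc/salt/minion.d/environment.conf (illustrative path; one value per environment)
saltenv: dev
# Reject saltenv overrides passed at runtime, such as state.apply saltenv=prod
lock_saltenv: True
```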

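G.3 pillar/top.sls - Pillar top file

This top file is identical in all three branches. When a pillarenv is active, Salt only evaluates the top file section whose key matches it, so the key is rendered from saltenv rather than hardcoded as base. Grain targets also need match: compound, because top file targets default to glob matching on the minion ID.

```yaml
# pillar/top.sls
{{ saltenv }}:
  '*':
    - common
    - bringup

  'G@os_family:Windows':
    - match: compound
    - windows

  'G@os_family:RedHat or G@os_family:Debian or G@os_family:Suse':
    - match: compound
    - linux

  'G@requested_roles:nginx':
    - match: compound
    - nginx

  'G@requested_roles:iis':
    - match: compound
    - iis
```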

G.4 /etc/salt/master.d/roots-disable.conf

To serve states and pillar exclusively from Git, with no local file_roots or pillar_roots, add the following:

```yaml
file_roots: {}
pillar_roots: {}
```